Reproducible research¶

The problems/challenges outlined in the previous section can be grouped into three categories:

- computational resources: the need to handle large data volumes and long computation times;

- validation: a need for new, well-validated analysis methods;

- reproducibility: due to the workflow complexity, it can be difficult for others (or yourself in a few months time) to reproduce the analysis.

In this section we will focus on the third problem: reproducibility.

Foundations of science¶

Reproducibility is one of the foundation stones of the scientific method. From this follows the obligation for scientists to include enough information in their publications to enable others to independently reproduce the finding.

But how much information is enough information?

There is increasing concern that for science that relies on computation (i.e. almost all of current science), traditional publishing methods do not allow enough information to be included, making independent reproduction either extremely difficult or impossible.

What is worse, in many cases scientists cannot even reproduce their own results, leading to what some are calling a “credibility crisis” for computation-based research.

The credibility crisis¶

Climategate¶

In November 2009 a server at the Climatic Research Unit at the University of East Anglia was hacked, and several thousand e-mails and other files were copied and distributed on the Web.

Among the files was one called “HARRY_READ_ME.txt”, a 15000-line file describing the odyssey of a researcher at the CRU trying (with limited success) to reproduce some of the results previously published by the Unit, based on 11000 files from two old filesystems.

The protein structure story¶

In December 2006, Geoffrey Chang, a crystallographer at the Scripps Institute, retracted 3 Science articles, a Nature article, a PNAS article and a J. Mol. Biol. article. The retraction states:

“An in-house data reduction program introduced a change in sign for anomalous differences. This program, which was not part of a conventional data processing package, converted the anomalous pairs (I+ and I-) to (F- and F+), thereby introducing a sign change. As the diffraction data collected for each set of MsbA crystals and for the EmrE crystals were processed with the same program, the structures reported ... had the wrong hand.

...

We very sincerely regret the confusion that these papers have caused and, in particular, subsequent research efforts that were unproductive as a result of our original findings.”

Apparently, the in-house program was “legacy software inherited from a neighboring lab”.

A note on terminology: reproduction, replication and reuse¶

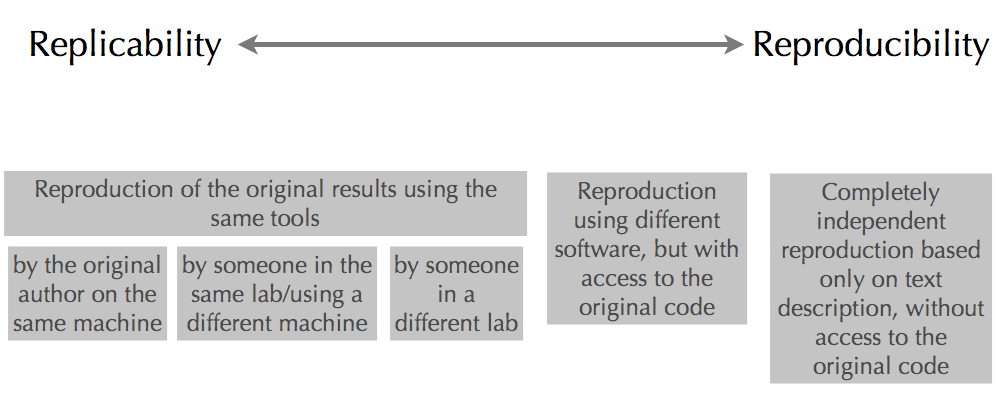

The term “reproducibility” covers a wide range of activities, from a completely independent repeat of the experiment, using only the “Materials and Methods” section of the article, with no access to the original authors’ source code, to the original scientist re-running the same code on the same machine. Some of the other points on the spectrum are illustrated in the following figure:

Completely independent reproduction is of course the gold standard. Some commentators have expressed the opinion that time or effort spent on making other points on the spectrum (sometimes described as “replication” rather than “reproduction”) easier is either wasted, or worse, counterproductive.

This point of view tends to suppose that “mere” replication is simple, which is generally not the case, and ignores:

- the case where there are discrepancies between what is stated in the Material and Methods and what is in the original code;

- the case where there is not enough information in the article to reproduce the experiment;

- the considerable benefits of code reuse.

What makes it hard to replicate your own research?¶

“I thought I used the same parameters but I’m getting different results”

“I can’t remember which version of the code I used to generate figure 6”

“The new student wants to reuse that model I published three years ago but he can’t reproduce the figures”

“It worked yesterday”

“Why did I do that?”

None of these are real quotes, but they distill a lot of similar laments I’ve heard from myself and colleagues. When I give talks about reproducible research I often show these quotes, and they always elicit laughter and rueful smiles of recognition, irrespective of the scientific specialty of the audience members.

It is a common experience among computational scientists that replicating our own results, especially with the passage of time (but sometimes only hours or days later), is not always easy, and sometimes impossible.

Why is that?

I think we can identify three general classes of reasons:

- Complexity

- As we tackle more and more challenging problems (due to the progress of science and due to Moore’s Law, which allows us to buy more and more powerful hardware), the complexity of our code and computing environment tends to increase. This can lead to an excessive dependence of our results on small details and a situation where small changes have big effects.

- Entropy

- both our computing environment (hardware, operating system, compilers, ...) and the versions of libraries that we use in our code change over time. Every time you upgrade your operating system or buy a new computer, the likelihood that you can replicate your old results goes down.

- Memory limitations

- By this I mean human, not computer memory limitations. Conscientious scientists write down the details of what they do in their lab notebooks, but often there are so many details that it’s not possible to note them all, or things which seem implicit and obvious at the time are not written down and are later forgetten.

What can we do about it?

That is the point of this tutorial.

What makes it hard to reproduce other people’s research?¶

Here again we need to distinguish between independent reproduction based only on the published article without access to the original source code, and replication/ reimplementation with access to the code.

In the former case, the main barrier is inadequate (incorrect or incomplete) descriptions of the model and/or analysis procedure in the paper, including supplementary material. Nordlie et al. (2009) [1] propose “good model description practice” for computational neuroscience papers which aims at ensuring model descriptions are sufficiently complete for reproduction.

Where the code is available, the difficulties are essentially the same as when trying to replicate your own results from your own code, but more acute:

- you don’t have either the lab notebooks or the implicit knowledge of the original authors in trying to understand the code;

- you may not have access to all of the libraries/tools used by the original authors (where these are in-house or expensive commercial software).

Aims of this tutorial¶

I hope I have convinced you, if you were unconvinced already, that reproducibility of computational research is:

- important;

- often not easy to achieve (or not as easy as it should be).

In this tutorial I will focus on replicability, in the sense defined above, rather than independent reproducibility, i.e. on being able to replicate your own results, or those of your students, postdocs or collaborators, weeks, months or years later.

Even if you choose not to share your code with others (I think you should, both for the reasons of credibility outlined above and because of anecdotal evidence that sharing code increases citations of your papers, but I understand that people do have fears about doing so), investing the effort to improve replicability of your results will pay dividends:

- when you have to modify and regenerate figures in response to reviewers’ comments;

- when a student graduates and leaves the lab;

- when you want to start a new project that builds on existing work;

- and it could save you from painful retractions

- or from crucifiction in the media!

This tutorial will therefore expose you to, or remind you about, tools that can help to address the problems of complexity, entropy and memory limitations identified above, and so help to answer the question “How can we make it easier to reproduce our own research?”

| [1] | Nordlie E., Gewaltig M.-O. and Plesser H.E. (2009) PLoS Comp Biol 5(8): e1000456. doi:10.1371/journal.pcbi.1000456 |